The goal of the project is to build a robotic vision system that mimics human eye motions. The research could lead to an enhanced vision system capable of both quick scanning (saccadic eye motion) and smooth object following (smooth pursuit), with minimal computational overhead and inexpensive generic hardware. Current state-of-the-art systems require significant post-processing and expensive hardware.

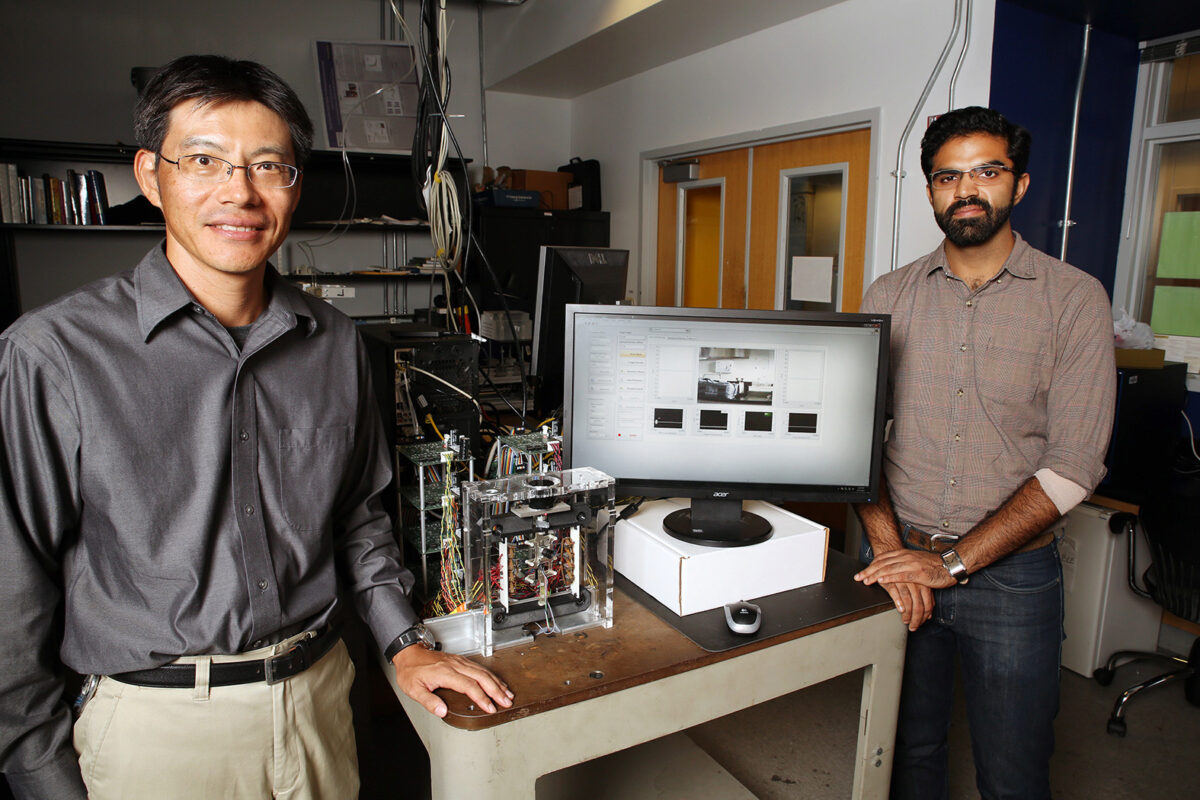

Dr. Ueda's new NSF project will pave the way for a novel camera positioning system (hardware) and a real-time vision system (software) that together require no post-processing and produce ready-to-use images in real time.

While previous studies focused solely on either mechanical design or image processing, Dr. Ueda’s research merges the areas of system dynamics and image processing. The image processing methods being developed are inspired by ocular physiology: working in coordination with inherently discrete and rapid ocular movements, they mimic saccades and smooth pursuit in a fast-moving robotic eye. A piezoelectrically driven robotic camera positioning mechanism will be used to demonstrate the effectiveness of this approach.